HashiCorp + Multi-cloud

The rising popularity of the cloud has fundamentally changed the way that IT administrators manage infrastructure and applications. We're still in the early innings of enterprise cloud adoption. The strides made in the last ten years have put a great deal of power at admins' fingertips, but often at a cognitive and operational cost.

Let's use GDPR constraints as an example. You're a SaaS provider based in San Francisco with customers in both the US and Europe. Naturally, when you first built and shipped your product, you launched in a data center located in the US. But due to constraints imposed by the European Union's General Data Protection Regulation, personally identifiable information about customers based in the EU can't be transferred out of the region under certain circumstances. Your customers have begun to require that you also deploy your product in a data center in Europe where their information can reside.

Now, you can be glad that it's 2021 and you're operating in the cloud. Your cloud provider has data centers in Europe that you can take advantage of, but there are still questions around the best process to spin up new virtual infrastructure, network it all together, and deploy your applications on it.

How can you ensure that the new infrastructure is identical to the old?

How is networking affected if you decide to change instance types and require new machines?

Are there any errors in the ad-hoc scripts you wrote to create resources?

The HashiCorp Open-source Suite

HashiCorp is an infrastructure management software company founded in 2012 that builds open-source tools for the cloud era. Its products solve exactly the kind of problem described above, allowing users to manage infrastructure with simple and repeatable processes.

The key cloud trend that created a product opportunity for HashiCorp is that the cloud has made infrastructure dynamic in nature, whereas it was previously static. Enterprises no longer provision their own resources with the intention of keeping them around on a 5+ year lifecycle, but rather virtually spin resources up and down on a daily basis.

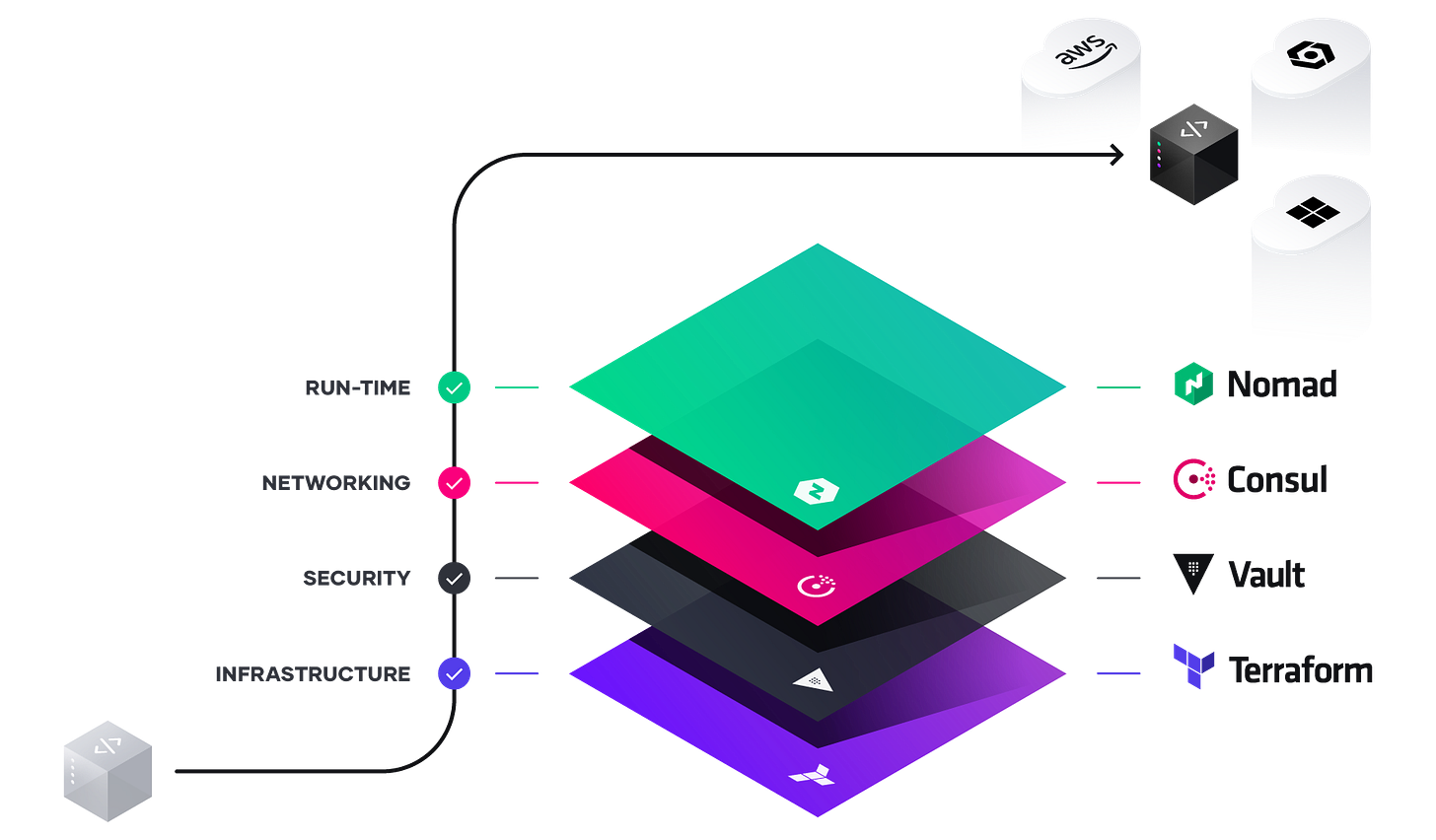

Whether it’s deploying applications across regions (e.g. US west coast and US east coast), across cloud providers (e.g. AWS and Microsoft Azure), or in a hybrid cloud model (private and public clouds), HashiCorp’s tools provide a consistent experience for the operator. HashiCorp’s suite is composed of four core products (alongside a few less prominent tools):

Terraform (infrastructure)

Vault (security)

Consul (networking)

Nomad (orchestration)

I will briefly touch on what each of these four products accomplishes, before giving a more macro analysis of cloud trends acting as tailwinds for the products.

Terraform — Multi-Cloud Infrastructure

You'll notice I've put a redundant "multi-cloud" in the title of each of the next four sections, next to the name of HashiCorp's four core products. HashiCorp does the same thing on its landing page, showing that a key piece of the company's product messaging is cloud agnosticism, but I'll get more into that later when talking about higher-level cloud trends.

Terraform has helped to popularize the infrastructure as code paradigm, in which infrastructure requirements and definitions are provided via static machine-readable files. Application source code development has long made use of control mechanisms like versioning, peer review, and security audit; infrastructure as code brings the same concepts to the infra world. A basic Terraform config may look something like this:

- Server a
  - Instance type: t2.micro
  - ami: "ami-abcd1234"
- Server b
  - Instance type: t3.micro
  - ami: "ami-efgh5678"
- Private network a
  - cidr_block: "10.0.0.0/16"

With this file (and many others) containing the base set of resources required to run your applications, you can point Terraform at any supported cloud provider (which includes every major one), check the file against everything that is currently provisioned, create any missing resources, and delete any superfluous ones.

The ability to define infrastructure needs once and provision them with a consistent process is hugely powerful when working with multiple deployments.
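To make the "check, create, delete" step concrete, here is a minimal Python sketch of that comparison. It is not how Terraform is implemented; the `plan` function, the resource names, and the "currently provisioned" data are all hypothetical, chosen to mirror the example config above.

```python
from typing import Dict, List

Resource = Dict[str, str]


def plan(desired: Dict[str, Resource], actual: Dict[str, Resource]) -> Dict[str, List[str]]:
    """Compare the declared config with what exists and list the actions needed."""
    create = sorted(name for name in desired if name not in actual)
    delete = sorted(name for name in actual if name not in desired)
    update = sorted(
        name for name in desired
        if name in actual and desired[name] != actual[name]
    )
    return {"create": create, "update": update, "delete": delete}


# Desired state, mirroring the example config (names are illustrative).
desired = {
    "server_a": {"instance_type": "t2.micro", "ami": "ami-abcd1234"},
    "server_b": {"instance_type": "t3.micro", "ami": "ami-efgh5678"},
    "private_network_a": {"cidr_block": "10.0.0.0/16"},
}

# Hypothetical view of what is currently provisioned in the cloud account.
actual = {
    "server_a": {"instance_type": "t2.micro", "ami": "ami-abcd1234"},
    "server_b": {"instance_type": "t2.micro", "ami": "ami-efgh5678"},
    "old_server": {"instance_type": "t2.micro", "ami": "ami-abcd1234"},
}

actions = plan(desired, actual)
print(actions)
```

In this made-up scenario the network is missing and would be created, `server_b` has drifted to a different instance type and would be updated, and `old_server` is no longer declared and would be deleted. Running the same comparison against a second region or provider is what makes the process repeatable.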

Vault — Multi-Cloud Security

The rise of dynamic infrastructure has also changed a lot about application security and how companies build security into their production systems. With static infrastructure, administrators took a classic "castle and moat" approach to security, with heavy trust between devices contained within a well-defined perimeter and little trust in devices on the outside. Static infrastructure allowed statically defined IP addresses to be placed on pre-defined allow lists from which requests should be trusted.

In dynamic environments, security perimeters can't always be clearly defined, static IPs certainly aren't as relevant, and services adopt more of a "zero-trust" security model. To learn more about zero-trust and perimeter-less security, see my article on Cloudflare and SASE.

At its core, Vault is a CLI tool like other HashiCorp products. The two main security functions that it offers are storage and encryption for secrets, and identity-based access. The secret storage tool provides a single, centralized authentication store that all deployments can take advantage of. Intuitively, this makes securing multi-cloud or multi-region deployments simpler.

With a simple command like the following, you can get up and running with Vault and start storing application secrets in whatever persistent storage layer you choose to configure:

$ vault kv put key/path database_password=<my_password>

On the identity-based access side, Vault integrates with many common identity providers like AWS IAM or Okta SSO to create trusted entities and roles that applications can assume to define trust relationships. These entities can be defined with static configuration files, similar to those used in Terraform.
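The idea of roles that grant access to secret paths can be sketched in a few lines of Python. This is a toy model rather than Vault's policy engine: the `Role` class, the `is_allowed` helper, the role name, and the capability names are assumptions made for illustration, and the path reuses the `key/path` example from the command above.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Role:
    """A named identity with capabilities granted per secret-path prefix."""
    name: str
    grants: Dict[str, Set[str]] = field(default_factory=dict)


def is_allowed(role: Role, path: str, capability: str) -> bool:
    """Deny by default; allow only if some granted prefix covers the path."""
    return any(
        path.startswith(prefix) and capability in capabilities
        for prefix, capabilities in role.grants.items()
    )


# Hypothetical role assumed by an application after it authenticates
# through an identity provider.
billing_app = Role(name="billing-app", grants={"key/": {"read"}})

print(is_allowed(billing_app, "key/path", "read"))     # read under a granted prefix
print(is_allowed(billing_app, "key/path", "update"))   # capability not granted
print(is_allowed(billing_app, "other/path", "read"))   # path outside any grant
```

The point of the sketch is that access follows from who the caller is, not from which IP address the request came from, so the same role definition works in any region or cloud.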

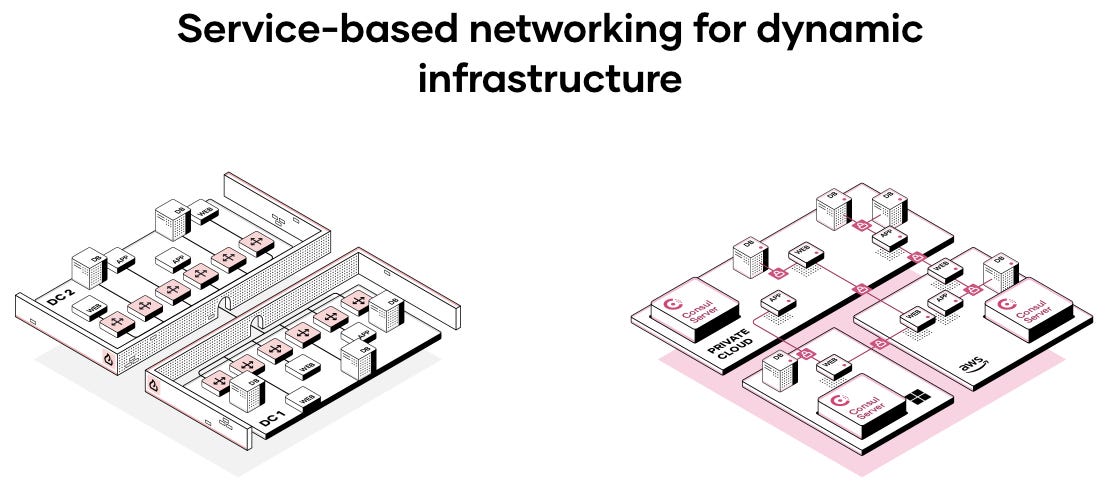

Consul — Multi-Cloud Networking

On HashiCorp's website, Consul is positioned as "the single control plane for cloud networks." In the prior world of static infrastructure, connectivity between services was created with static definitions. Complex firewall combinations were used to restrict access between services which should not be able to communicate.

But in the world of dynamic infrastructure, application networking is defined in terms of services and identities, rather than literal hardware. Similar to Vault, Consul depends heavily on zero-trust security in using these identities. Consul plays nicely on top of Kubernetes as a networking layer, but also with other orchestration tools (including Nomad, to be explained below).
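A small Python sketch can show why rules keyed on service names survive infrastructure churn where IP-based firewall rules would not. It is a simplified illustration, not Consul's API: the `registry`, `allowed`, `resolve`, and `may_connect` names, the service names, and the addresses are all hypothetical.

```python
from typing import Dict, Set, Tuple

# Service registry: service name -> addresses of its current instances.
registry: Dict[str, Set[str]] = {
    "web": {"10.0.1.5"},
    "db": {"10.0.2.9"},
}

# Connectivity rules are written against service names, never addresses.
allowed: Set[Tuple[str, str]] = {("web", "db")}


def resolve(service: str) -> Set[str]:
    """Return the addresses currently registered for a service."""
    return registry.get(service, set())


def may_connect(source: str, destination: str) -> bool:
    """Deny by default; allow only explicitly listed service pairs."""
    return (source, destination) in allowed


before = may_connect("web", "db")

# The web instance is replaced and comes back with a different address.
registry["web"] = {"10.0.1.77"}

after = may_connect("web", "db")
print(before, after, resolve("web"))
```

The rule allowing `web` to reach `db` never mentions an address, so replacing the instance only touches the registry. A real deployment would also have to prove each caller's identity cryptographically, which this sketch leaves out.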

Nomad — Multi-Cloud Orchestration

Nomad is an open-source application orchestration tool. Orchestration tools are the business logic layer of application deployments. They take care of deploying new versions of services without downtime, automatically scaling services horizontally when load increases or decreases, and spinning up new replicas of services when existing replicas fail. Orchestration is a fundamental component of software development used to ensure high reliability.

With static infrastructure, hardware was provisioned on a per application basis. If you needed to deploy a user metadata access service backed by a relational database, you would determine what type of hardware and how much of it is necessary to run the application ahead of time. With dynamic infrastructure and orchestration tools like Nomad, hardware is more homogeneous and less application-specific, providing added flexibility to developers. Orchestration tools help to schedule services on this relatively arbitrary hardware.
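Scheduling services onto interchangeable machines can be sketched as a placement problem. The Python below uses a generic best-fit heuristic under stated assumptions; it is not Nomad's scheduler, and the `Node`, `Task`, and `place` names, the resource figures, and the crude scoring (CPU and memory leftovers simply added together) are invented for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    cpu_free: int  # MHz still available
    mem_free: int  # MB still available


@dataclass
class Task:
    name: str
    cpu: int  # MHz requested
    mem: int  # MB requested


def place(task: Task, nodes: List[Node]) -> Optional[str]:
    """Best fit: choose the node that would have the least capacity left over."""
    candidates = [n for n in nodes if n.cpu_free >= task.cpu and n.mem_free >= task.mem]
    if not candidates:
        return None  # nothing can host the task right now
    best = min(candidates, key=lambda n: (n.cpu_free - task.cpu) + (n.mem_free - task.mem))
    best.cpu_free -= task.cpu
    best.mem_free -= task.mem
    return best.name


nodes = [Node("node-1", cpu_free=2000, mem_free=4096), Node("node-2", cpu_free=1000, mem_free=2048)]
tasks = [
    Task("user-metadata-api", cpu=500, mem=512),
    Task("postgres", cpu=1500, mem=3072),
    Task("batch-report", cpu=1500, mem=2048),
]

placements: Dict[str, Optional[str]] = {t.name: place(t, nodes) for t in tasks}
print(placements)
```

Here the small API lands on the smaller node, which leaves the larger node free for the database, and the last task stays unplaced until capacity appears. No machine was bought with a particular application in mind, which is the flexibility the paragraph above describes.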

The HashiCorp stack is now complete. Using HashiCorp's open-source tools, one can build infrastructure, secure and network applications, and orchestrate deployments.

Cloud Tailwinds for HashiCorp Tools

It's important to note that HashiCorp products can be used in on-premises environments as well. However, this ability has primarily been developed to give consistency to customers who started using HashiCorp in the cloud but still have legacy deployments.

In any case, these products were built for the cloud era, and three key cloud trends created the product opportunity for HashiCorp. The trends are the following:

Dynamic over static infrastructure

Disaggregation of workload from cloud provider

Rise of multi-deployment architectures

Dynamic over Static Infrastructure

This has been partially covered in each of the above sections because it is difficult to describe HashiCorp's products without talking about dynamic infrastructure, but it is worth explaining in a bit more detail here.

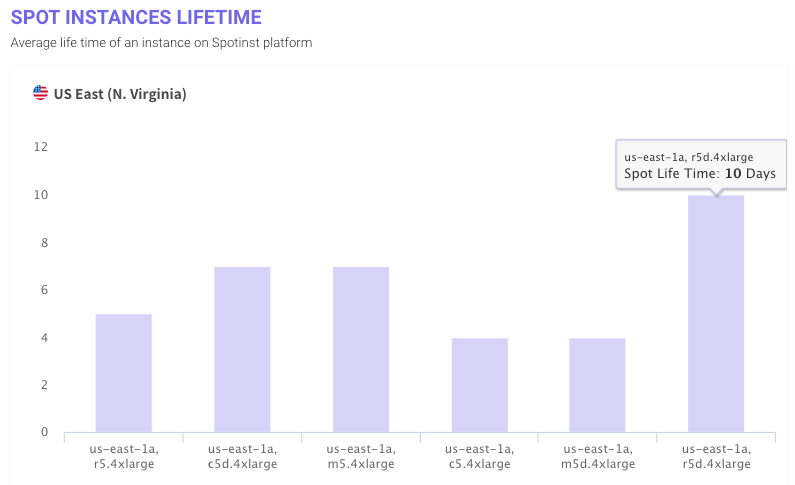

AWS EC2 is one of AWS' most popular services. With it, customers can rent compute nodes from Amazon data centers and pay at an hourly rate (EC2 stands for Elastic Compute Cloud). A common way to pay for this service is with spot instance pricing, in which customers bid in ongoing auctions for extra compute instance capacity that AWS hasn't sold to pre-paying customers. The graph below shows the average amount of time that customers rent spot instances in the AWS US East region:

The sampled customers rent spot instances on average for four to ten days before releasing them back to AWS’ pool of extra capacity. This short-term usage pattern highlights in one sense what HashiCorp means by dynamic infrastructure. One wouldn’t want to repeatedly rent and release cloud compute resources without a simple and repeatable process to create instances and tear them down. Even harder to imagine is renting physical compute in the pre-cloud era, shipping it to your on-prem data center, setting it up, using it for four days, and then sending it back.

Disaggregation of Workload from Cloud Provider

Big cloud is an interesting business because the players work to provide a differentiated offering with a product that, by nature, customers want to be homogeneous. Customers want to rent compute and storage with whatever specs they request (number of cores, clock speed, GB of RAM, etc.). They want these resources to be made available to them exactly as specified, and they want them to be infinitely horizontally scalable to the point of seeming homogeneous. But of course the differentiation between cloud providers lies in which one can provide homogeneous resources at the best price, provide the best developer experience, and include the most bells and whistles with the offering.

The point is that customers try to measure their workloads, make an educated guess about the resources that will be needed to support the workload, and at that point it shouldn't matter if it's 20 machines from AWS, or Azure, or GCP, or whoever. The disaggregation of workload from cloud provider supports what HashiCorp is building because the products are meant to work equally well no matter the public cloud in which they're being run.

Rise of Multi-deployment Architectures

With the global reach of today's IT services, it's increasingly common for enterprises to deploy their applications to multiple public and private clouds simultaneously. 90% of publicly traded companies have some form of a multi-cloud strategy, and 80% have a strategy that includes hybrid cloud. With these complicated configurations, about 40% of companies use tools specifically to aid with multi-cloud management. HashiCorp's products are included in this category.1

Very similar to how the disaggregation of workload from cloud provider has contributed to the popularity of HashiCorp products, multi-deployment architectures increase complexity and thus require a set of tools to make processes consistent and repeatable between deployments. HashiCorp products are aided by yet another cloud tailwind.

Though HashiCorp has not yet filed for an IPO, many analysts expect it to do so soon. The company has raised a total of $349M in funding from top firms such as IVP, Redpoint, and GGV Capital. It will be exciting to continue to track the company's progress over the next few months.