Liqid Composable Infrastructure

Engineers at my stage in their career cut their teeth in the cloud era. I've been building software, academically and professionally, for about eight years now, and yet I've never done anything that remotely resembles racking a server machine to host an application. And even without doing so, I've built data ingestion pipelines, web apps, and mobile games among other things.

With such a bias towards thinking of software purely in cloud terms, it's easy to forget the importance of enterprise data centers. As of 2020, only an estimated 20% of enterprise workloads were running in public clouds. The same report estimated that by 2023 that number may reach 40%, still leaving a majority in private clouds. [1]

Moving to the cloud has proven to be generally advantageous, but it still carries risks and costs, including:

Switching or migration costs in the form of engineering staff and time

Security implications inherent in distributed IT architecture

Total cost of ownership (TCO) can be higher if poorly executed

Take the second concern, for example. There are two main reasons that cloud-based IT architectures raise more security concerns than legacy, on-premises architectures.

Individual SaaS apps store corporate data and are generally not colocated. For example, Salesforce stores your data in AWS, while Marketo stores it in Azure.

Workforce distribution requires perimeter-less service access, which is more complicated to administer.

So we've established that many corporations still run their workloads in private data centers, and that for a few reasons this will not change overnight. Enterprises with fixed investment in these data centers thus have every reason to squeeze as much out of them as possible. They want to run these data centers with the efficiency and flexibility that are found in public clouds.

Liqid Composable Infrastructure

I had the opportunity last month to sit down with Bryan Schramm, one of the founders of Liqid. Liqid builds a software platform that enables composable infrastructure. Composable infrastructure brings the power and efficiency of cloud computing to the private data center. The beauty of the cloud is that you can get exactly the types of machines you need, when you need them and pay for nothing else.

With composable infrastructure, one thinks of their private data center as pools of independently scalable computing resources. You have a pool of X CPUs, a pool of Y storage drives, and a pool of Z networking switches. You can then combine resources from these pools to create server machines on the fly.

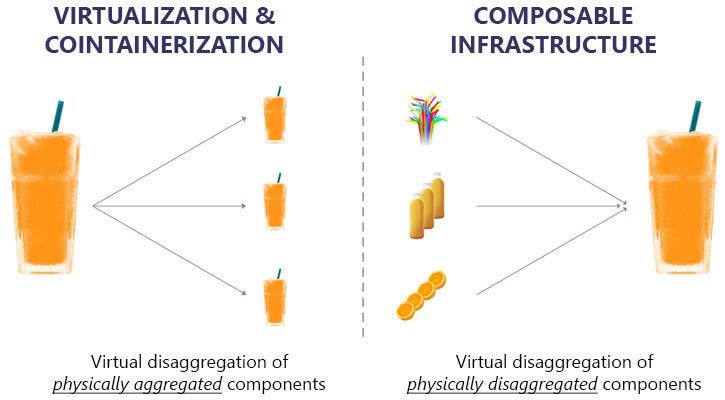
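To make the pooling idea concrete, here is a minimal sketch in Python. It is only a toy model of the concept: `ResourcePool`, `DataCenter`, `compose`, and `decompose` are names I made up for illustration, not part of Liqid's software, and real composition happens over a hardware fabric rather than by decrementing counters.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """A pool of interchangeable devices of one kind (hypothetical model)."""
    kind: str
    free: int


@dataclass
class ComposedServer:
    """A server assembled from devices drawn out of the pools."""
    name: str
    devices: dict = field(default_factory=dict)


class DataCenter:
    def __init__(self, pools):
        # pools maps a device kind to how many devices are available.
        self.pools = {kind: ResourcePool(kind, n) for kind, n in pools.items()}

    def compose(self, name, **wanted):
        # Validate the whole request first so a failed compose
        # leaves every pool untouched.
        for kind, count in wanted.items():
            if kind not in self.pools or count > self.pools[kind].free:
                raise ValueError(f"cannot satisfy {count} x {kind}")
        server = ComposedServer(name)
        for kind, count in wanted.items():
            self.pools[kind].free -= count
            server.devices[kind] = count
        return server

    def decompose(self, server):
        # Return every device to its pool so other servers can use it.
        for kind, count in server.devices.items():
            self.pools[kind].free += count
        server.devices.clear()


dc = DataCenter({"cpu": 8, "storage": 16, "network": 4})
web = dc.compose("web", cpu=2, storage=4, network=1)
print(dc.pools["cpu"].free)  # 6
dc.decompose(web)
print(dc.pools["cpu"].free)  # 8
```

The point of the sketch is the lifecycle: devices are claimed only for as long as a server needs them, then go back to the shared pool.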

When I first learned of this concept, I struggled to distinguish it from virtualization, which has been standard industry practice since the late 1990s and early 2000s. Virtualization and composable infrastructure are essentially each other's inverse. Virtualization creates the appearance of multiple machines out of a single machine via an additional software layer (the hypervisor) that sits above the hardware. Composable infrastructure inserts software control lower in the stack to aggregate the hardware components themselves.

The difference between the two can be described using glasses of orange juice as an example. Virtualization takes a single, complete glass of juice, and gives the appearance of multiple smaller glasses, each of those also containing a complete set of components.

With composable infrastructure, you draw from collections of components to create a single glass of juice. The advantage with this approach is that you can create what you need when you need it.

The two main advantages of composing servers on demand are (1) better resource utilization and (2) faster application deployment. Liqid has found that the typical enterprise data center has only 12-18% utilization. This means that a large majority of hardware resources are not being actively used at any given time (while they are depreciating!). In a world of static infrastructure, this over-provisioning is sensible as you need to have many machines on standby to be able to handle peak load. Using Liqid composable infrastructure, utilization can double or even triple, greatly driving down hardware costs.
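A quick back-of-the-envelope calculation shows why utilization matters so much for hardware cost. The sketch below assumes a deliberately simple model of my own: the hardware you need equals the useful work divided by the utilization rate. The 15% starting point is just the midpoint of the 12-18% range above, and the function name is hypothetical.

```python
def hardware_needed(useful_work, utilization):
    """Units of hardware capacity needed to deliver `useful_work`
    when only the fraction `utilization` of capacity does real work.
    Simplified model: ignores peak load, redundancy, and headroom."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return useful_work / utilization


useful_work = 15  # arbitrary units of real work the data center must deliver

baseline = hardware_needed(useful_work, 0.15)  # static infrastructure
doubled = hardware_needed(useful_work, 0.30)   # utilization doubles
tripled = hardware_needed(useful_work, 0.45)   # utilization triples

for label, units in [("15%", baseline), ("30%", doubled), ("45%", tripled)]:
    share = units / baseline
    print(f"utilization {label}: {units:.1f} units ({share:.0%} of baseline)")
```

Under this model, doubling utilization halves the hardware needed for the same work, and tripling it cuts the requirement to about a third.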

Composable infrastructure also allows for faster data center and application deployment, for two reasons. First, reduced hardware requirements shorten the time needed to stand up the data center. Second, the underlying hardware choice is less crucial when there's flexibility to switch out hardware at a later time if needed.

Virtualization can (and often should) be used in tandem with composable infrastructure. Liqid integrates with industry standards like Kubernetes to allow virtualized environments on top of the bare metal servers that have been dynamically composed via the Liqid fabric. As another example, this article explains how Liqid can be used to compose VMware host machines in seconds, showing the power of composable infrastructure and virtualization in tandem.

Liqid Elements

The Liqid product suite is composed of a few distinct elements which are deployed to the data center. First there's Liqid Matrix, which is the management software that automates the dynamic composition of computer systems from pools of bare metal elements. Along with the software "brains", the product suite also has multiple hardware components.

The fabric is the PCIe switch to which server elements are physically connected, and it is what makes server composition possible. One fabric option is the Liqid Grid 48 Port PCIe Gen 4 Fabric Switch, as shown below:

Before I interviewed Bryan, I wondered why it's necessary to deploy a physical box to the data center as part of the Liqid solution. His core answer to this was latency. Most Liqid deployments are in high performance AI environments. Colocating the fabric that composes server machines with those machines has a latency advantage over hosting that composition software outside of the data center. In high performance AI environments, even a slight increase in latency can result in hours or days of increased total runtime.
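To see how a small per-operation delay can add up to hours, here is a rough illustration. The numbers are hypothetical ones I picked for the example, not measurements from Liqid, and the model assumes the device round trips happen one after another with no overlap, which overstates the effect for workloads that pipeline their transfers.

```python
def added_runtime_hours(transfers, extra_latency_us):
    """Extra wall-clock hours when each of `transfers` sequential
    device round trips becomes `extra_latency_us` microseconds slower.
    Assumes no overlap between transfers (a worst-case simplification)."""
    extra_seconds = transfers * extra_latency_us * 1e-6
    return extra_seconds / 3600


# Hypothetical job: 10 billion round trips, each 5 microseconds slower.
hours = added_runtime_hours(transfers=10_000_000_000, extra_latency_us=5)
print(f"{hours:.1f} extra hours")
```

With these made-up inputs, a few microseconds per transfer turns into more than half a day of extra runtime.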

Liqid Fabric is built with off-the-shelf PCIe switches. PCIe stands for Peripheral Component Interconnect Express. This is essentially a computer bus that connects hardware components together to facilitate data transfer. When connecting peripheral components over PCIe, that point of interconnect must also be attached via PCIe to achieve low latency, which requires that the switch be local to the data center.

Liqid also builds other hardware components that can be plugged into the data center to enhance their product offering, including expansion chassis, NVMe SSDs, and storage class memory.

Competition vs. Partnership with Big Cloud

The second question that I had prior to my interview with Bryan was how Liqid competes with the major cloud providers like AWS, Microsoft Azure, and GCP and what level of interest these players have in Liqid's market.

My intuition was that cloud providers are in the business of running some of the largest data centers in the world, so how is it that they have not come up with workable composable infrastructure solutions of their own? And what interest would they have in selling these solutions to customers, similar to how AWS Outposts delivers physical AWS infrastructure to private data centers, giving an AWS-like yet colocated computing environment?

First, it's clear that the engineering team at Liqid has built something that has the attention of big cloud. Liqid already sells their solution to Amazon, Alibaba, and Facebook, all of which have large data center teams and huge hardware footprints. The Liqid team has met with engineering teams from these companies who are looking to understand how they can get such high utilization rates.

Also, Bryan shared an interesting perspective on why major cloud providers may not have come up with their own solutions for data center resource utilization. The budgets are so large at these major cloud providers (for AWS, very roughly $48B of spend in 2020 [2]) that they can throw money at problems and continue to scale up, rather than hunker down and squeeze every last drop of efficiency from existing hardware.

Software-Defined Everything

The trend that I notice with Liqid's product, as with those of other companies I've written about, is how software continues to infiltrate the hardware stack at lower and lower levels. Using software to define hardware composition provides flexibility and efficiency in multiple types of computing environments.

Over the summer, I wrote about how Cloudflare took software-defined networking to the nth degree and built more efficient CDNs, serverless compute engines, and SASE networks. I also explored how Rubrik has brought flexible software to data backup and recovery and greatly reduced recovery times by doing so. Liqid is yet another example of software eating the world of commoditized hardware, and I'm excited to follow their progress in the months and years to come.

[1] https://www.gartner.com/smarterwithgartner/gartner-predicts-the-future-of-cloud-and-edge-infrastructure/

[2] https://www.cnbc.com/2021/10/28/aws-earnings-q3-2021.html